Since the 2000s, the use of robotic assistance for laparoscopic interventions has grown continuously. In this context, the da Vinci robot from Intuitive Surgical is the only available teleoperation platform certified for the operating room. A recent ECRI report highlights that more than 570,000 procedures were performed worldwide with the da Vinci in 2014, up 178% compared to 2009. This system helps the surgeon by improving perception, dexterity and comfort during manipulation, which has made robotic surgery a standard procedure in gynecology, general surgery and urology. However, the French healthcare agencies Agence Nationale de Sécurité du Médicament et des produits de Santé and Haute Autorité de Santé have noted that serious adverse events can occur during robotic surgeries. Although some technical issues related to the robot itself were reported, the surgeons' level of expertise is the main factor pointed out. To address this, several robotic systems are available for training technical skills, such as the dV-Trainer from Mimic Technologies or the da Vinci Skills Simulator. These systems provide multiple reproducible scenarios and automatic evaluation of the surgical tasks. Nevertheless, their main drawback is that they only evaluate the training task globally, which is not precise or informative enough for the trainee.

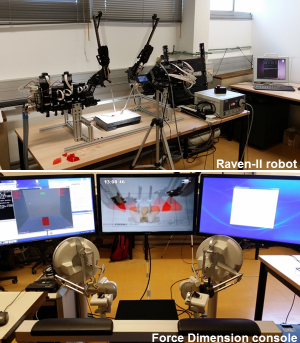

To improve the efficiency of robotic training, our project focuses on two objectives. The first is to recognize surgical gestures. To this end, we proposed a novel approach for the unsupervised segmentation and recognition of surgical gestures in robotic training that does not rely on any statistical or probabilistic model. In this work, multiple experts were asked to perform a pick-and-place training task using the Raven-II robot, available at the LIRMM lab (this robot closely mimics the da Vinci). From the trajectories of the robotic surgical tools, we segment the signals into surgical primitives, called dexemes, and use these primitives to learn and retrieve the complete surgical gestures, called surgemes. Our approach thus consists of two steps: unsupervised segmentation and recognition. With this approach, we detect surgemes at a rate of 77.5% and reach a temporal matching of 81.9% between the manual annotations and the detections. Using those detections, our second objective is to provide an in-depth evaluation of the surgical robotic task in order to efficiently (i.e., locally) evaluate the trainee's performance through dedicated metrics.
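To make the notion of temporal matching more concrete, here is a minimal sketch of how agreement between manual annotations and detections could be scored. It assumes a per-sample comparison of label sequences, which may differ from the exact definition used in our work; the function name `temporal_matching` and the surgeme labels (`reach`, `grasp`, `move`) are hypothetical and chosen for illustration only.

```python
from typing import Sequence


def temporal_matching(annotated: Sequence[str], detected: Sequence[str]) -> float:
    """Fraction of time samples whose detected label equals the manual one.

    Assumption: both sequences hold one surgeme label per time sample and
    cover the same recording, so they must have the same length.
    """
    if len(annotated) != len(detected):
        raise ValueError("label sequences must cover the same samples")
    if not annotated:
        raise ValueError("label sequences must not be empty")
    matches = sum(a == d for a, d in zip(annotated, detected))
    return matches / len(annotated)


# Hypothetical 10-sample recording: the detector ends "reach" one sample early.
manual = ["reach"] * 4 + ["grasp"] * 2 + ["move"] * 4
detected = ["reach"] * 3 + ["grasp"] * 3 + ["move"] * 4

score = temporal_matching(manual, detected)
print(f"temporal matching: {score:.1%}")
```

A per-sample score of this kind penalizes boundary shifts in proportion to their duration, which is why it can differ from a count of correctly detected surgemes.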
